My BosonAI hackathon project placed 2nd in the benchmarking stream: Benchmarking the scalable Audio Higgs Model and Qwen3.2


Project Overview

Problem

When customers call support, they often get forced into a rigid menu (“press 1/2/3…”), even though:

- they don’t know which category their issue belongs to,

- routing takes time,

- scaling human agents is expensive,

- requests can span multiple domains.

Our idea

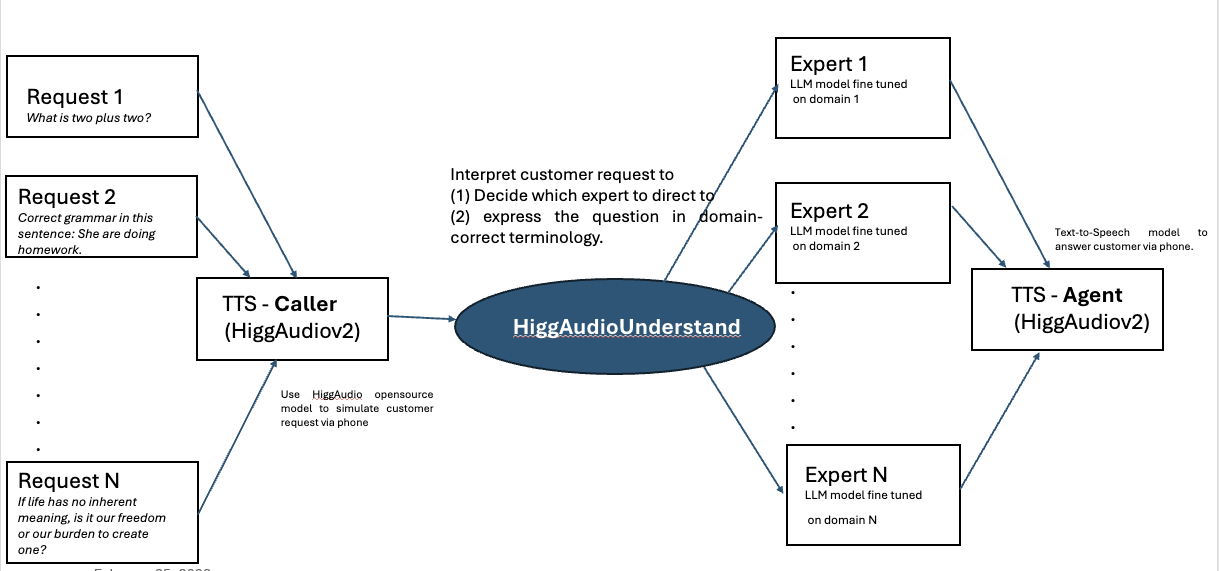

Use audio-first routing:

- synthesize/ingest caller request (TTS caller),

- understand request → decide department + intent,

- dispatch to the right expert model,

- respond as an agent via TTS.

System Diagram

System pipeline: audio request → understanding/router → expert(s) → TTS agent response.
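The four stages above can be sketched as plain function composition. This is a minimal illustration, not the project's code: every function here (`understand`, `route`, `expert_reply`, `synthesize`, `handle_call`) is a hypothetical stand-in, the "audio" is just UTF-8 text, and the router uses keyword rules where the real system uses the understanding model plus an LLM.

```python
from dataclasses import dataclass


@dataclass
class Route:
    department: str
    intent: str


def understand(audio: bytes) -> str:
    """Hypothetical audio-understanding stage: here the 'audio' is UTF-8 text."""
    return audio.decode("utf-8")


def route(transcript: str) -> Route:
    """Hypothetical router: keyword rules standing in for the model's decision."""
    text = transcript.lower()
    if "reservation" in text:
        return Route("BOOKING", "NEW_BOOKING")
    if "check-in" in text:
        return Route("CHECK_IN", "ONLINE_CHECKIN")
    return Route("GENERAL", "OTHER")


def expert_reply(r: Route, transcript: str) -> str:
    """Hypothetical expert stage: one canned reply per route."""
    return f"[{r.department}/{r.intent}] handling: {transcript}"


def synthesize(text: str) -> bytes:
    """Hypothetical TTS stage: returns bytes in place of a waveform."""
    return text.encode("utf-8")


def handle_call(audio: bytes) -> bytes:
    # audio request -> understanding/router -> expert -> TTS agent response
    transcript = understand(audio)
    r = route(transcript)
    return synthesize(expert_reply(r, transcript))
```

Calling `handle_call(b"Please help me make a new reservation for Toronto.")` walks one request through all four stages; swapping any stub for a real model call leaves the overall shape unchanged.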

Audio Examples (Caller vs Agent)

Below are paired audio clips (caller request → agent response).


Example 1 — Simple QA

Caller: “What is two plus two?”

Agent: short phrase answer

Example 7 — Time question

Caller: “How many minutes are in an hour?”

Agent: short phrase answer

Case Study: Airline Customer Calling Service

Example 0

“Please help me make a new reservation for Toronto.”

Example 35

“Online check-in isn’t working for me.”

Example 56

“Set up UM service for my child.”

Routing taxonomy (skeleton)

We route into:

- Department (BOOKING / CHANGES / REFUNDS / …)

- Intention (NEW_BOOKING / SEAT_SELECTION / …)
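One way to represent this two-level scheme is a mapping from department to its allowed intents, so that a router output can be validated before dispatch. The sketch below is an assumption about representation, not the project's actual data structure; it contains only labels named in this post, and the helper names `is_valid_route` and `department_of` are hypothetical.

```python
from typing import Optional

# Only labels named in this post; the full label set is longer (elided in the source).
TAXONOMY: dict[str, set[str]] = {
    "BOOKING": {"NEW_BOOKING", "SEAT_SELECTION"},
    "CHANGES": {"FLIGHT_CHANGE", "NAME_CORRECTION"},
    "REFUNDS": {"CANCEL_ITINERARY", "VOUCHER_QUESTION"},
    "BAGGAGE": {"ADD_EXTRA_BAGS", "LOST_BAGS"},
    "FLIGHT_STATUS": {"STATUS_REQUEST", "DELAY_REASON"},
    "CHECK_IN": {"ONLINE_CHECKIN", "PASSPORT_ISSUE"},
    "LOYALTY": {"MISSING_MILES", "MERGE_ACCOUNTS"},
    "PAYMENT": {"PAYMENT_FAILED", "REQUEST_RECEIPT"},
    "SPECIAL_ASSISTANCE": {"WHEELCHAIR", "SPECIAL_MEAL"},
    "GENERAL": {"SPEAK_TO_AGENT", "OTHER"},
}


def is_valid_route(department: str, intent: str) -> bool:
    """True if the intent belongs to the given department."""
    return intent in TAXONOMY.get(department, set())


def department_of(intent: str) -> Optional[str]:
    """Look up the department that owns an intent, or None if unknown."""
    for department, intents in TAXONOMY.items():
        if intent in intents:
            return department
    return None
```

A check like `is_valid_route("BOOKING", "LOST_BAGS")` returning `False` is a cheap guard against a model emitting a department/intent pair that does not exist in the taxonomy.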

Routing labels:

- **BOOKING**: NEW_BOOKING, SEAT_SELECTION, …

- **CHANGES**: FLIGHT_CHANGE, NAME_CORRECTION, …

- **REFUNDS**: CANCEL_ITINERARY, VOUCHER_QUESTION, …

- **BAGGAGE**: ADD_EXTRA_BAGS, LOST_BAGS, …

- **FLIGHT_STATUS**: STATUS_REQUEST, DELAY_REASON, …

- **CHECK_IN**: ONLINE_CHECKIN, PASSPORT_ISSUE, …

- **LOYALTY**: MISSING_MILES, MERGE_ACCOUNTS, …

- **PAYMENT**: PAYMENT_FAILED, REQUEST_RECEIPT, …

- **SPECIAL_ASSISTANCE**: WHEELCHAIR, SPECIAL_MEAL, …

- **GENERAL**: SPEAK_TO_AGENT, OTHER, …

Benchmarking: Concurrency

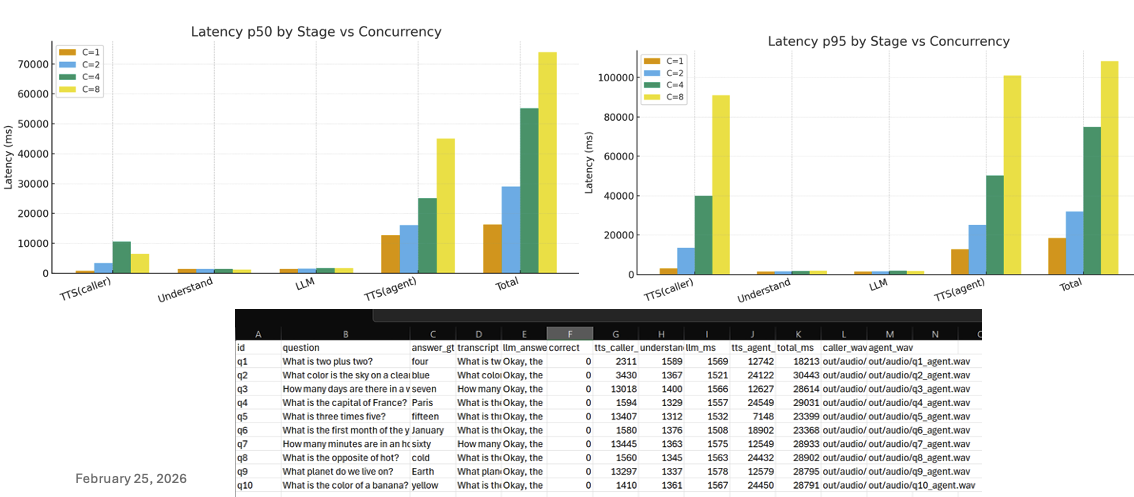

Latency (p50/p95) by stage vs. concurrency.

Key metrics (fill in)

| Setting | p50 Total Latency (ms) | p95 Total Latency (ms) | Notes |
|---|---|---|---|
| C=1 | TBD | TBD | baseline |
| C=2 | TBD | TBD | |
| C=4 | TBD | TBD | |
| C=8 | TBD | TBD | |
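For reference, p50/p95 total latency at each concurrency level could be collected along these lines. This is a sketch under stated assumptions, not the harness used for the plots: `fake_request` is a hypothetical stand-in that only sleeps for 10 ms, concurrency is modelled with a thread pool, and percentiles use the nearest-rank definition.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request(_: int) -> float:
    """Hypothetical stand-in for one end-to-end call; returns latency in ms."""
    start = time.perf_counter()
    time.sleep(0.01)  # placeholder for understanding + routing + TTS
    return (time.perf_counter() - start) * 1000.0


def percentile(values, pct: int) -> float:
    """Nearest-rank percentile of a non-empty sequence."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def benchmark(concurrency: int, n_requests: int = 16) -> dict:
    """Run n_requests with at most `concurrency` in flight; report p50/p95."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(fake_request, range(n_requests)))
    return {"p50": percentile(latencies, 50), "p95": percentile(latencies, 95)}


if __name__ == "__main__":
    for c in (1, 2, 4, 8):
        stats = benchmark(c)
        print(f"C={c}: p50={stats['p50']:.1f} ms, p95={stats['p95']:.1f} ms")
```

To break latency down by stage, as in the figure, each stage would need its own timer; the sketch only measures the total per request.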

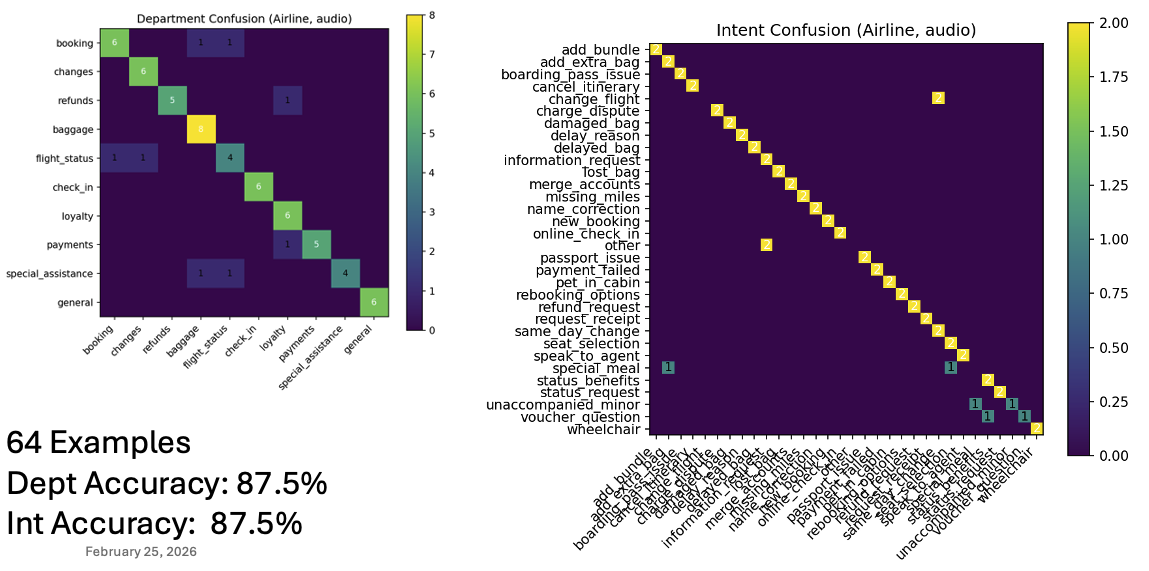

Benchmarking: Small dataset

Confusion matrices on SMALL DATASET (department / intent).

Summary numbers (example)

- Dataset: 64 examples

- Department accuracy: 87.5%

- Intent accuracy: 87.5%
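Taken at face value, 87.5% on 64 examples corresponds to 56 correct predictions (56 / 64 = 0.875). The sketch below shows one way such accuracy figures and confusion-matrix counts can be computed from gold and predicted labels; the function names and the eight-item toy data are illustrative assumptions, not the project's evaluation code or results.

```python
from collections import Counter


def accuracy(gold: list, pred: list) -> float:
    """Fraction of positions where the prediction matches the gold label."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    if not gold:
        raise ValueError("need at least one example")
    correct = sum(g == p for g, p in zip(gold, pred))
    return correct / len(gold)


def confusion_counts(gold: list, pred: list) -> Counter:
    """Count (gold, predicted) pairs; off-diagonal keys are the errors."""
    return Counter(zip(gold, pred))


# Toy data for illustration only (not the project's results).
gold = ["BOOKING"] * 8
pred = ["BOOKING"] * 7 + ["CHANGES"]

print(accuracy(gold, pred))          # 7 of 8 correct
print(confusion_counts(gold, pred))  # one BOOKING example predicted as CHANGES
```

Running the same two functions once on department labels and once on intent labels gives the two accuracy figures and the cell counts behind both confusion matrices.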

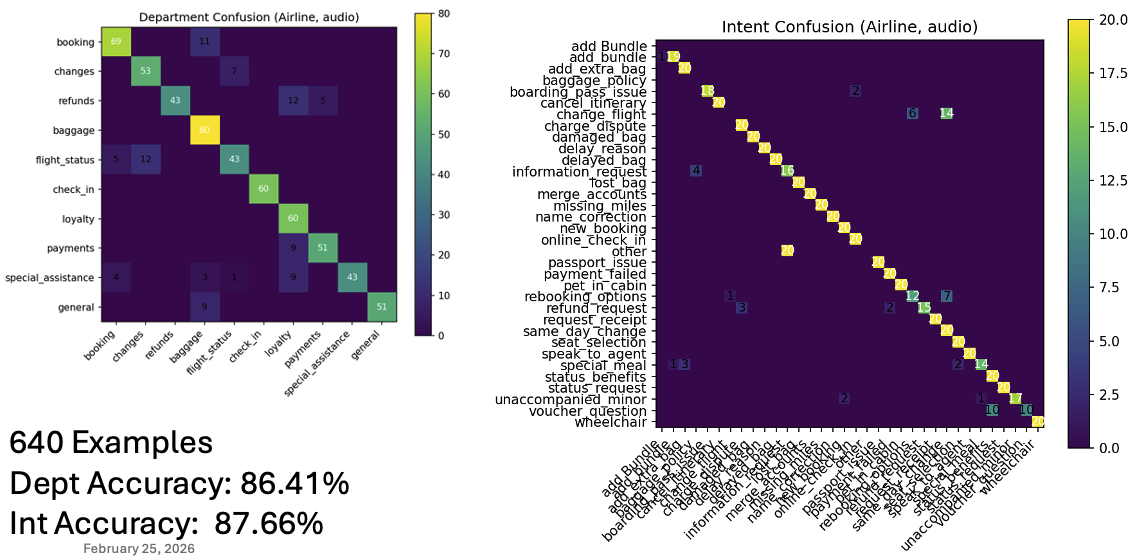

Confusion matrices on LARGE DATASET (department / intent).

Reproducibility

Environment

- TTS caller: HiggAudio v2

- Router: HiggAudioUnderstand + Qwen3.2

- TTS agent: HiggAudio v2

How to run (skeleton)

```bash
# 1) install
pip install -r requirements.txt

# 2) run demo
python demo.py --config configs/demo.yaml
```